Have you ever wondered how machines understand the nuances of human language? It all starts with tokenization, the foundational step in training language models to grasp our complex languages.

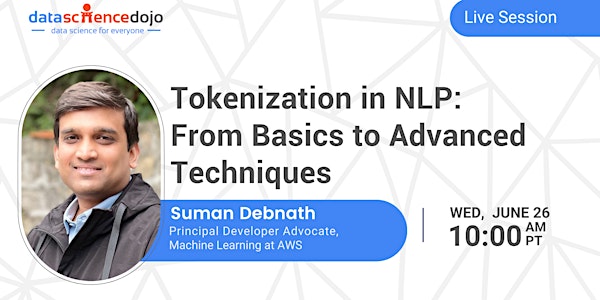

In this live session, you will unravel the mysteries behind tokenization in natural language processing (NLP). From the initial challenges of segmenting text into manageable pieces to the sophisticated techniques that enable deeper language understanding, this talk is tailored for enthusiasts eager to deepen their knowledge and refine their skills in NLP.

Whether you're just starting out or looking to brush up on the latest in NLP, this session promises a blend of foundational knowledge and advanced insights, all presented in an accessible and engaging format.

- Understand tokenization's impact on language models.

- Learn how to split text into tokens for deeper analysis.

- Explore Byte Pair Encoding's efficiency.

- Discover sliding windows for better training data.

- Learn how tokens are converted into vectors.
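
To give a flavor of three of these topics (splitting text, mapping tokens to integer IDs, and building sliding-window training pairs), here is a minimal Python sketch. It is not taken from the session itself: the function names, the regular expression, and the sample sentence are all assumptions chosen for illustration, and it uses a simple word-level vocabulary rather than Byte Pair Encoding.

```python
import re

def split_text(text):
    # Illustrative splitter (an assumption, not the session's method):
    # break on whitespace and keep common punctuation as separate tokens.
    pieces = re.split(r'([,.:;?!"()]|--|\s)', text)
    return [p for p in pieces if p.strip()]

def build_vocab(tokens):
    # Assign each unique token an integer ID in sorted order.
    return {tok: idx for idx, tok in enumerate(sorted(set(tokens)))}

def sliding_windows(ids, context_size, stride=1):
    # Each input window is paired with the same window shifted right by
    # one position, so every target is the "next token" for its input.
    pairs = []
    for start in range(0, len(ids) - context_size, stride):
        inputs = ids[start:start + context_size]
        targets = ids[start + 1:start + context_size + 1]
        pairs.append((inputs, targets))
    return pairs

text = "Hello, world. Hello again."  # hypothetical sample text
tokens = split_text(text)
vocab = build_vocab(tokens)
ids = [vocab[t] for t in tokens]
pairs = sliding_windows(ids, context_size=3)

for inputs, targets in pairs:
    print(inputs, "->", targets)
```

A larger `stride` reduces the overlap between consecutive windows. Turning the IDs into vectors would be the next step, typically with a learned embedding table, which this sketch leaves out.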